[Big Data Series] MapReduce Example: Highest Temperature of Each Year

I. The project is built with Maven; pom.xml declares the following dependencies

    <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
        xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>com.slp</groupId>
        <artifactId>HadoopDevelop</artifactId>
        <version>0.0.1-SNAPSHOT</version>
        <packaging>jar</packaging>

        <name>HadoopDevelop</name>
        <url>http://maven.apache.org</url>
        <properties>
            <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
            <hadoopVersion>2.8.0</hadoopVersion>
        </properties>

        <dependencies>
            <!-- Hadoop start -->
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-common</artifactId>
                <version>${hadoopVersion}</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-hdfs</artifactId>
                <version>${hadoopVersion}</version>
            </dependency>

            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-mapreduce-client-core</artifactId>
                <version>${hadoopVersion}</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-client</artifactId>
                <version>${hadoopVersion}</version>
            </dependency>
            <!-- Hadoop -->
            <dependency>
                <groupId>jdk.tools</groupId>
                <artifactId>jdk.tools</artifactId>
                <version>1.8</version>
                <scope>system</scope>
                <systemPath>${JAVA_HOME}/lib/tools.jar</systemPath>
            </dependency>
            <dependency>
                <groupId>junit</groupId>
                <artifactId>junit</artifactId>
                <version>3.8.1</version>
                <scope>test</scope>
            </dependency>
        </dependencies>
    </project>

II. Input file

    2014010114
    2014010216
    2014010317
    2014010410
    2014010506
    2012010609
    2012010732
    2012010812
    2012010919
    2012011023
    2001010116
    2001010212
    2001010310
    2001010411
    2001010529
    2013010619
    2013010722
    2013010812
    2013010929
    2013011023
    2008010105
    2008010216
    2008010337
    2008010414
    2008010516
    2007010619
    2007010712
    2007010812
    2007010999
    2007011023
    2010010114
    2010010216
    2010010317
    2010010410
    2010010506
    2015010649
    2015010722
    2015010812
    2015010999
    2015011023
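
The mapper in the implementation section takes the year from the first four characters of each record and the temperature from everything after the eighth character. The sketch below shows that split in plain Java, without Hadoop. Reading the middle four characters as month and day is an assumption based on how the data looks, and `TemperatureRecord` is a hypothetical helper that is not part of the original project.

```java
// Hypothetical helper for illustration; not part of the original project.
final class TemperatureRecord {
    final String year;
    final int temperature;

    private TemperatureRecord(String year, int temperature) {
        this.year = year;
        this.temperature = temperature;
    }

    // Assumed layout: 4-digit year, 4 more digits (presumably month and day),
    // then the temperature, e.g. "2014010114" -> year 2014, temperature 14.
    static TemperatureRecord parse(String line) {
        String trimmed = line.trim();
        if (trimmed.length() < 9) {
            throw new IllegalArgumentException("Record too short: " + line);
        }
        String year = trimmed.substring(0, 4);
        // Integer.parseInt drops leading zeros, so "05" becomes 5.
        int temperature = Integer.parseInt(trimmed.substring(8));
        return new TemperatureRecord(year, temperature);
    }

    public static void main(String[] args) {
        TemperatureRecord r = TemperatureRecord.parse("2008010105");
        System.out.println(r.year + "," + r.temperature);
    }
}
```

Unlike the mapper, this sketch rejects records shorter than nine characters instead of letting `substring` fail; whether malformed lines should be skipped or should stop the job is a design decision the original leaves open.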

III. Implementation

    package com.slp.temperature;

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class Temperature {

        /**
         * The four type parameters are:
         * KeyIn    the Mapper's input key, here the byte offset at which each line starts (0, 12, ...)
         * ValueIn  the Mapper's input value, here the text of each line
         * KeyOut   the Mapper's output key, here the year taken from the line
         * ValueOut the Mapper's output value, here the temperature taken from the line
         */
        static class TempMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

            @Override
            protected void map(LongWritable key, Text value, Context context)
                    throws IOException, InterruptedException {
                // Print the record before mapping
                System.out.println("Before Mapper : " + key + "," + value);
                String line = value.toString();
                String year = line.substring(0, 4);
                int temperature = Integer.parseInt(line.substring(8));
                context.write(new Text(year), new IntWritable(temperature));
                // Print the record after mapping
                System.out.println("After Mapper:" + year + "," + temperature);
            }
        }

        /**
         * The four type parameters are:
         * KeyIn    the Reducer's input key, here the year of each line
         * ValueIn  the Reducer's input value, here the temperature of each line
         * KeyOut   the Reducer's output key, here each distinct year
         * ValueOut the Reducer's output value, here the highest temperature of that year
         */
        static class TempReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

            @Override
            protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int maxValue = Integer.MIN_VALUE;
                StringBuilder sb = new StringBuilder();
                // Find the maximum among the values
                for (IntWritable value : values) {
                    maxValue = Math.max(maxValue, value.get());
                    sb.append(value).append(",");
                }
                // Print the group before reducing
                System.out.println("Before Reduce:" + key + "," + sb.toString());
                context.write(key, new IntWritable(maxValue));
                // Print the result after reducing
                System.out.println("After Reduce : " + key + "," + maxValue);
            }
        }

        public static void main(String[] args) throws Exception {
            // Input path
            String dst = "D:\\hadoopnode\\input\\temp.txt";
            // Output path
            String desout = "D:\\hadoopnode\\outtemp";
            Configuration conf = new Configuration();
            conf.set("fs.hdfs.impl", org.apache.hadoop.hdfs.DistributedFileSystem.class.getName());
            conf.set("fs.file.impl", org.apache.hadoop.fs.LocalFileSystem.class.getName());
            // Job.getInstance replaces the deprecated new Job(conf) constructor
            Job job = Job.getInstance(conf);
            // Required when the job is packaged and run as a jar
            job.setJarByClass(Temperature.class);

            // Input and output paths used by the job
            FileInputFormat.addInputPath(job, new Path(dst));
            FileOutputFormat.setOutputPath(job, new Path(desout));

            // Use the custom Mapper and Reducer as the task classes of the two phases
            job.setMapperClass(TempMapper.class);
            job.setReducerClass(TempReducer.class);

            // Key and value types of the final output
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            // Run the job and wait until it completes
            job.waitForCompletion(true);
            System.out.println("Finished");
        }
    }
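
To make the data flow of the job easier to follow, here is a minimal plain-Java sketch of the same three steps: emit a (year, temperature) pair per line, group the pairs by year, then keep the maximum of each group. It uses no Hadoop classes and only stands in for what the framework does between the mapper and the reducer. `LocalMaxTemperature` and its method names are hypothetical and exist only for this illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical local stand-in for the Hadoop job; for illustration only.
final class LocalMaxTemperature {

    // "Map" and "shuffle": emit (year, temperature) for each line and group by year.
    // A TreeMap keeps the years sorted, similar to the sorted keys a reducer receives.
    static Map<String, List<Integer>> mapAndGroup(List<String> lines) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            String year = line.substring(0, 4);
            int temperature = Integer.parseInt(line.substring(8));
            grouped.computeIfAbsent(year, k -> new ArrayList<>()).add(temperature);
        }
        return grouped;
    }

    // "Reduce": collapse each year's temperatures to their maximum.
    static Map<String, Integer> reduceToMax(Map<String, List<Integer>> grouped) {
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            int max = Integer.MIN_VALUE;
            for (int temperature : entry.getValue()) {
                max = Math.max(max, temperature);
            }
            result.put(entry.getKey(), max);
        }
        return result;
    }

    public static void main(String[] args) {
        // Four records taken from the input file above.
        List<String> lines = Arrays.asList(
                "2014010114", "2014010317", "2001010116", "2001010529");
        Map<String, Integer> maxByYear = reduceToMax(mapAndGroup(lines));
        for (Map.Entry<String, Integer> entry : maxByYear.entrySet()) {
            System.out.println(entry.getKey() + "," + entry.getValue());
        }
    }
}
```

With the four sample records, `main` prints `2001,29` followed by `2014,17`. The sketch holds every value of a year in memory at once, which is fine for a small sample; the real reducer instead iterates over the values that Hadoop streams to it.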

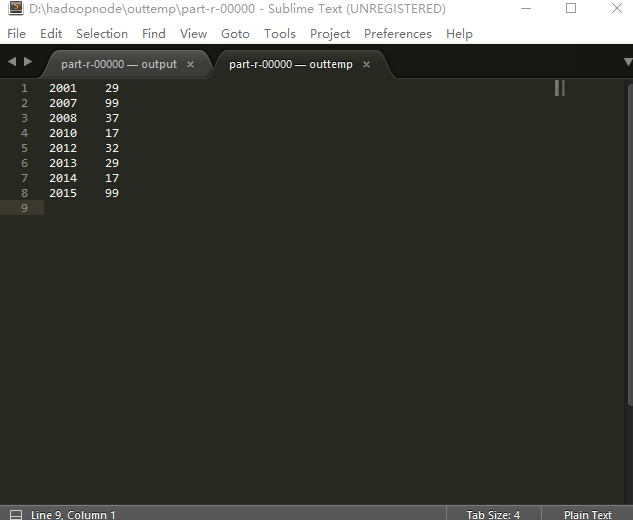

IV. Output

    Before Mapper : 0,2014010114
    After Mapper:2014,14
    Before Mapper : 12,2014010216
    After Mapper:2014,16
    Before Mapper : 24,2014010317
    After Mapper:2014,17
    Before Mapper : 36,2014010410
    After Mapper:2014,10
    Before Mapper : 48,2014010506
    After Mapper:2014,6
    Before Mapper : 60,2012010609
    After Mapper:2012,9
    Before Mapper : 72,2012010732
    After Mapper:2012,32
    Before Mapper : 84,2012010812
    After Mapper:2012,12
    Before Mapper : 96,2012010919
    After Mapper:2012,19
    Before Mapper : 108,2012011023
    After Mapper:2012,23
    Before Mapper : 120,2001010116
    After Mapper:2001,16
    Before Mapper : 132,2001010212
    After Mapper:2001,12
    Before Mapper : 144,2001010310
    After Mapper:2001,10
    Before Mapper : 156,2001010411
    After Mapper:2001,11
    Before Mapper : 168,2001010529
    After Mapper:2001,29
    Before Mapper : 180,2013010619
    After Mapper:2013,19
    Before Mapper : 192,2013010722
    After Mapper:2013,22
    Before Mapper : 204,2013010812
    After Mapper:2013,12
    Before Mapper : 216,2013010929
    After Mapper:2013,29
    Before Mapper : 228,2013011023
    After Mapper:2013,23
    Before Mapper : 240,2008010105
    After Mapper:2008,5
    Before Mapper : 252,2008010216
    After Mapper:2008,16
    Before Mapper : 264,2008010337
    After Mapper:2008,37
    Before Mapper : 276,2008010414
    After Mapper:2008,14
    Before Mapper : 288,2008010516
    After Mapper:2008,16
    Before Mapper : 300,2007010619
    After Mapper:2007,19
    Before Mapper : 312,2007010712
    After Mapper:2007,12
    Before Mapper : 324,2007010812
    After Mapper:2007,12
    Before Mapper : 336,2007010999
    After Mapper:2007,99
    Before Mapper : 348,2007011023
    After Mapper:2007,23
    Before Mapper : 360,2010010114
    After Mapper:2010,14
    Before Mapper : 372,2010010216
    After Mapper:2010,16
    Before Mapper : 384,2010010317
    After Mapper:2010,17
    Before Mapper : 396,2010010410
    After Mapper:2010,10
    Before Mapper : 408,2010010506
    After Mapper:2010,6
    Before Mapper : 420,2015010649
    After Mapper:2015,49
    Before Mapper : 432,2015010722
    After Mapper:2015,22
    Before Mapper : 444,2015010812
    After Mapper:2015,12
    Before Mapper : 456,2015010999
    After Mapper:2015,99
    Before Mapper : 468,2015011023
    After Mapper:2015,23
    Before Reduce:2001,12,10,11,29,16,
    After Reduce : 2001,29
    Before Reduce:2007,23,19,12,12,99,
    After Reduce : 2007,99
    Before Reduce:2008,16,14,37,16,5,
    After Reduce : 2008,37
    Before Reduce:2010,10,6,14,16,17,
    After Reduce : 2010,17
    Before Reduce:2012,19,12,32,9,23,
    After Reduce : 2012,32
    Before Reduce:2013,23,29,12,22,19,
    After Reduce : 2013,29
    Before Reduce:2014,14,6,10,17,16,
    After Reduce : 2014,17
    Before Reduce:2015,23,49,22,12,99,
    After Reduce : 2015,99
    Finished